David M. Friscia’s Homepage

There are three great virtues of a programmer: Laziness, Impatience, and Hubris.

Professional Experience

Professional Organizations

| Environment | Languages | Hardware | Tools |
|---|---|---|---|
| Mainframe | Cobol/Cobol II | IBM 360 / 370 | TSO / Micro Focus Cobol |
| Mini | RPG/400 CL | IBM System 38 AS/400 DEC Alpha Sun450 | SEU / SAD |
| Desktop Networks | ANSI-SQL MS-Transact SQL MS-Access MS-Visual Basic Omni-Trans Interchange Format C PERL PowerBuilder XML COM (ActiveX) Objects ADO (ActiveX Data Objects) Active Server Pages HTML DHTML JavaScript VBScript Java Java Servlets and Java Server Pages | WLAN LAN Networks | MS-Visual Studio MS SQL / Sybase SQL Erwin ERX Rational Rose Enterprise & ClearCase Estimate Professional IEEE Software Risk Management Drumbeat 2000 eXcelon 2.0 XML Spy |

2125032ATP

2125032CFII

2224328GII

Universal Constant

There Is Nothing Permanent Except Change — The Only Thing That Is Constant Is Change

Heraclitus of Ephesus (Ἡράκλειτος, Herakleitos; c. 535 BC – 475 BC) was a Greek philosopher, known for his doctrine of change being central to the universe, and for establishing the term Logos (λόγος) in Western philosophy as meaning both the source and fundamental order of the Cosmos.

Mathematics

There are two main reasons mathematics has fascinated humanity for two thousand years. First, math gives us the tools we need to understand the universe and build things. Second, the study of mathematical objects themselves can be beautiful and intriguing, even if they have no apparent practical applications. What's truly amazing is that sometimes a branch of math will start out as something completely abstract, with no immediate scientific or engineering applications, and then much later a practical use can be found.

Classic Mathematicians

Mixing religious mysticism with philosophy, the classical thinkers were led by their nature to explore basic mathematical ideas. Mathematics is an increasingly central part of our world and an immensely fascinating realm of thought. Generations of brilliant minds built up the basic mathematical ideas and tools that sit at the foundation of our understanding of math and its relationship to the world.

Euclid

Archimedes

Al-Khwarizmi

Napier

Kepler

Pascal

Newton

Leibniz

Bayes

Euler

The Only Numbers You Need To Do Math

There are infinitely many numbers, and infinitely many ways to combine and manipulate those numbers.

Mathematicians often represent numbers in a line. Pick a point on the line, and this represents a number.

At the end of the day, though, almost all of the numbers that we use are based on a handful of extremely important numbers that sit at the foundation of all of math.

These are the eight numbers you actually need to build the number line, and to do just about anything quantitative.

One

Negative One

One Tenth

Pi

Euler's Number, e

Square Root of -1: i

entropy

n., pl. entropies. -- entropic adj. -- entropically adv.

Rudolf Clausius (1822 – 1888) is the originator of the concept of entropy. Clausius described entropy as the transformation-content. This was in contrast to earlier views, based on the theories of Isaac Newton, that heat was an indestructible particle that had mass.

Entropy is a measure of the number of specific ways in which a system (Cosmos) may be arranged, often taken to be a measure of disorder.

The entropy of an isolated system never decreases, because isolated systems spontaneously evolve towards thermodynamic equilibrium, which is the state of maximum entropy. Entropy was originally defined for a thermodynamically reversible process as

\[ \Delta S = \int \frac{dQ_{rev}}{T} \]

where the change in entropy (ΔS) is found by integrating each incremental reversible transfer of heat into a closed system (dQ_rev) divided by the uniform thermodynamic temperature (T) of that system.

The above definition is sometimes called the macroscopic definition of entropy because it can be used without regard to any microscopic picture of the contents of a system. In thermodynamics, entropy has been found to be more generally useful and it has several other formulations. Entropy was discovered when it was noticed to be a quantity that behaves as a function of state. Entropy is an extensive property, but it is often given as an intensive property, specific entropy, expressed as entropy per unit mass or entropy per mole.
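As a rough illustration of the macroscopic definition: when heat is transferred reversibly at a constant temperature, the integral reduces to ΔS = Q / T. The sketch below applies that to melting one kilogram of ice. The function name and the latent heat figure (about 334 kJ/kg, a common textbook approximation) are assumptions made for this example and do not come from the text above.

```python
def entropy_change_isothermal(heat_joules: float, temperature_kelvin: float) -> float:
    """Entropy change for reversible heat transfer at constant temperature.

    With T constant, the integral of dQ_rev / T reduces to Q / T.
    """
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return heat_joules / temperature_kelvin


# Assumed values: melting 1 kg of ice at 273.15 K,
# using an approximate latent heat of fusion of 334 kJ/kg.
heat_absorbed = 334_000.0      # joules
melting_point = 273.15         # kelvin

delta_s = entropy_change_isothermal(heat_absorbed, melting_point)
print(f"Entropy change: {delta_s:.1f} J/K")
```

The result is positive because heat flows into the system; the same heat flowing out would give a negative change for the ice, with the surroundings gaining at least as much.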

In the modern microscopic interpretation of entropy in statistical mechanics, entropy is the amount of additional information needed to specify the exact physical state of a system, given its thermodynamic specification. The role of thermodynamic entropy in various thermodynamic processes can thus be understood by understanding how and why that information changes as the system evolves from its initial condition. It is often said that entropy is an expression of the disorder, or randomness of a system, or of our lack of information about it (which on some views of probability, amounts to the same thing as randomness). The second law is now often seen as an expression of the fundamental postulate of statistical mechanics via the modern definition of entropy.

Although the concept of entropy was originally a thermodynamic construct, it has been adapted in other fields of study, including information theory, psychodynamics, thermoeconomics/ecological economics, and evolution.

The three nested systems of sustainability - the economy wholly contained by society, wholly contained by the biophysical environment.

One of the first major applications of Boolean algebra came from the 1937 master's thesis of Claude Shannon, one of the most important mathematicians and engineers of the 20th century. Shannon realized that switches in relay networks, like those in a telephone network, could be described with Boolean algebra. Shannon's innovation made the design of switch networks vastly easier: rather than needing to actually play around with network connections themselves, the techniques developed by Boole and his successors provided a mathematical framework allowing for more efficient network layouts.

I thought of calling it 'information', but the word was overly used, so I decided to call it 'uncertainty'. [...] Von Neumann told me, 'You should call it entropy, for two reasons. In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. In the second place, and more important, nobody knows what entropy really is, so in a debate you will always have the advantage.'

Conversation between Claude Shannon and John von Neumann regarding what name to give to the attenuation in phone-line signals.

When viewed in terms of information theory, the entropy state function is simply the amount of information (in the Shannon sense) that would be needed to specify the full microstate of the system. This is left unspecified by the macroscopic description.

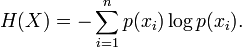

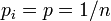

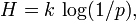

In information theory, entropy is the measure of the amount of information that is missing before reception and is sometimes referred to as Shannon entropy. Shannon entropy is a broad and general concept which finds applications in information theory as well as thermodynamics. It was originally devised by Claude Shannon in 1948 to study the amount of information in a transmitted message. The definition of the information entropy is, however, quite general, and is expressed in terms of a discrete set of probabilities \(p_i\):

\[ H = -\sum_{i} p_i \log p_i \]

In the case of transmitted messages, these probabilities were the probabilities that a particular message was actually transmitted, and the entropy of the message system was a measure of the average amount of information in a message. For the case of equal probabilities (i.e. each message is equally probable), the Shannon entropy (in bits) is just the number of yes/no questions needed to determine the content of the message.
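A minimal sketch of the calculation, assuming base-2 logarithms so the result comes out in bits. The function name and the sample distributions are made up for illustration. For eight equally likely messages the entropy is 3 bits, which matches the three yes/no questions needed to single one of them out.

```python
import math
from typing import Sequence


def shannon_entropy_bits(probabilities: Sequence[float]) -> float:
    """Shannon entropy, in bits, of a discrete probability distribution."""
    if any(p < 0 for p in probabilities):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probabilities), 1.0):
        raise ValueError("probabilities must sum to 1")
    # Terms with p == 0 contribute nothing (p * log p tends to 0 as p tends to 0).
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Eight equally probable messages: three yes/no questions identify one.
uniform_entropy = shannon_entropy_bits([1 / 8] * 8)

# An uneven distribution carries less information per message on average.
skewed_entropy = shannon_entropy_bits([0.5, 0.25, 0.25])

print(uniform_entropy, skewed_entropy)
```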

The question of the link between information entropy and thermodynamic entropy is a debated topic. While most authors argue that there is a link between the two, a few argue that they have nothing to do with each other.

The expressions for the two entropies are similar. The information entropy H for equal probabilities \(p_i = p = 1/n\) is

\[ H = k \log(1/p) \]

where k is a constant which determines the units of entropy. There are many ways of demonstrating the equivalence of "information entropy" and "physics entropy", that is, the equivalence of "Shannon entropy" and "Boltzmann entropy". Nevertheless, some authors argue for dropping the word entropy for the H function of information theory and using Shannon's other term "uncertainty" instead.

The entropy of a black hole is given by the equation:

\[ S = \frac{k c^{3} A}{4 \hbar G} \]

where S is the entropy,

c is the speed of light,

k is Boltzmann's constant,

A is the surface area of the event horizon,

ħ (“h-bar”) is the reduced Planck constant (or Dirac constant) and

G is the gravitational constant. (Hawking's Paradox)
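To get a feel for the size of this quantity, here is a small sketch that evaluates the formula numerically. It makes two assumptions that are not in the text above: the black hole is non-rotating and uncharged, so the horizon area follows from the Schwarzschild radius r = 2GM/c², and its mass is one solar mass (taken as roughly 1.988 × 10³⁰ kg). The function names are illustrative only.

```python
import math

# Physical constants in SI units (CODATA values).
BOLTZMANN_K = 1.380649e-23        # J/K
SPEED_OF_LIGHT = 299_792_458.0    # m/s
HBAR = 1.054571817e-34            # J*s, reduced Planck constant
GRAVITATIONAL_G = 6.67430e-11     # m^3 kg^-1 s^-2


def schwarzschild_horizon_area(mass_kg: float) -> float:
    """Horizon area of a non-rotating, uncharged black hole (an assumption here)."""
    radius = 2.0 * GRAVITATIONAL_G * mass_kg / SPEED_OF_LIGHT**2
    return 4.0 * math.pi * radius**2


def black_hole_entropy(area_m2: float) -> float:
    """Entropy S = k c^3 A / (4 hbar G), in joules per kelvin."""
    return BOLTZMANN_K * SPEED_OF_LIGHT**3 * area_m2 / (4.0 * HBAR * GRAVITATIONAL_G)


SOLAR_MASS_KG = 1.98847e30  # approximate value, assumed for this example

area = schwarzschild_horizon_area(SOLAR_MASS_KG)
entropy = black_hole_entropy(area)
print(f"Horizon area: {area:.3e} m^2, entropy: {entropy:.3e} J/K")
```

Because the area grows with the square of the mass, doubling the mass in this sketch quadruples the entropy.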

Sometimes, developments in pure math can, decades or centuries later, turn out to have amazing applications.

Remarkably, about a century after Boole's initial investigations, mathematicians and scientists discovered an extremely powerful set of applications for formal logic, and now this apparently abstract mathematical and logical tool is at the heart of the global economy.

Boolean algebra, and other forms of abstract propositional logic, are based on dealing with compound propositions made up of simple propositions joined by logical connectors like "and", "or", and "not". Boolean algebra — taking true and false values, manipulating them according to logical rules, and coming up with appropriate true and false results — is the fundamental basis of the modern digital computer.
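One way to see how true and false values become arithmetic is a half adder, the circuit that adds two one-bit numbers. The sketch below builds it from nothing but "and", "or", and "not". The function name and the choice of a half adder as the example are assumptions made for illustration.

```python
from itertools import product
from typing import List, Tuple


def half_adder(a: bool, b: bool) -> Tuple[bool, bool]:
    """Add two one-bit values using only and/or/not.

    Returns (sum_bit, carry_bit).
    """
    # Exclusive or: true when exactly one input is true.
    sum_bit = (a or b) and not (a and b)
    # A carry is produced only when both inputs are true.
    carry_bit = a and b
    return sum_bit, carry_bit


# Enumerate every combination of inputs to produce the full truth table.
truth_table: List[Tuple[bool, bool, bool, bool]] = [
    (a, b, *half_adder(a, b)) for a, b in product([False, True], repeat=2)
]

for a, b, sum_bit, carry_bit in truth_table:
    print(f"{int(a)} + {int(b)} -> carry {int(carry_bit)}, sum {int(sum_bit)}")
```

Chaining such adders, with the carry of one feeding the next, is how wider binary numbers can be added.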

EXISTENCE IS PROBLEMATIC.

To be human is characterised by an existence that precedes its essence. To Sartre, "existence precedes essence" means that a personality is not built over a previously designed model or a precise purpose, because it is the human being who chooses to engage in such enterprise. ... It is this overtaking of a present constraining situation by a project to come that Sartre names transcendence.

Jean-Paul Charles Aymard Sartre 1964 Nobel Prize in Literature

Words To Live By

- When one door closes and another door opens, you are probably in prison.

- To me, "drink responsibly" means don't spill it.

- When I say, "The other day," I could be referring to any time between yesterday and 15 years ago.

- Interviewer: "So, tell me about yourself." Me: "I'd rather not. I kinda want this job."

- Cop: "Please step out of the car." Me: "I'm too drunk. You get in."

- I remember being able to get up without making sound effects.

- I had my patience tested. I'm negative.

- Remember, if you lose a sock in the dryer, it comes back as a Tupperware lid that doesn't fit any of your containers.

- If you're sitting in public and a stranger takes the seat next to you, just stare straight ahead and say "Did you bring the money?"

- When you ask me what I am doing today, and I say "nothing," it does not mean I am free. It means I am doing nothing.

- Age 60 might be the new 40, but 9:00 is the new midnight.

- I finally got eight hours of sleep. It took me three days, but whatever.

- I run like the winded.

- I hate when a couple argues in public, and I missed the beginning and don't know whose side I'm on.

- When someone asks what I did over the weekend, I squint and ask, "Why, what did you hear?"

- I don't remember much from last night, but the fact that I needed sunglasses to open the fridge this morning tells me it was awesome.

- When you do squats, are your knees supposed to sound like a goat chewing on an aluminum can stuffed with celery?

- I don't mean to interrupt people. I just randomly remember things and get really excited.

- When I ask for directions, please don't use words like "east."

- It's the start of a brand new day, and I'm off like a herd of turtles.

- Don't bother walking a mile in my shoes. That would be boring. Spend 30 seconds in my head. That'll freak you right out.

- That moment when you walk into a spider web suddenly turns you into a karate master.

- Sometimes, someone unexpected comes into your life outta nowhere, makes your heart race, and changes you forever. We call those people cops.

- The older I get, the earlier it gets late.

- My luck is like a bald guy who just won a comb.

Self-Diagnosis For Men

A simplified urine test that may be relevant for you old guys

- Go outside and pee in the garden.

- If ants gather:- diabetes.

- If you pee on your feet:- prostate.

- If it smells like a barbecue:- cholesterol.

- If when you shake it, your wrist hurts:- osteoarthritis.

- If you return to your room with your penis outside your pants:- Alzheimer’s.

To err is human.... to really screw up, you need a computer...

Murphy's Law ("If anything can go wrong, it will") was born at Edwards Air Force Base in 1949 at North Base.

It was named after Capt. Edward A. Murphy, an engineer working on Air Force Project MX981,

(a project) designed to see how much sudden deceleration a person can stand in a crash.

One day, after finding that a transducer was wired wrong, he cursed the technician responsible and said, "If there is any way to do it wrong, he'll find it."

The contractor's project manager kept a list of "laws" and added this one, which he called Murphy's Law.

Vanity of vanities, meaningless, all things under the sun are vanity of vanities and a chasing of the wind. . . Ecc.1:14, 2:11

"I’ve given it a lot of thought, I’d rather be shallow and rich!"

"Reality is Particles and Forces, everything else is personal Opinion and Conjecture."

"I was born for this, I came into the world for this: to bear witness to the truth;

and all who are on the side of truth listen to my voice."

"Truth?" said Pilate, "What is that?" John 18:37

Catonis Monosticha [Canon Of Morals] - anglice et latine producta--quibus nihil discipulis aptius--vel Disticha.

Commercial Intercourse Decorum which includes:

Everything Worth Knowing is Known In Corporate America,

The Rhetoric of Ideas and Information Exchange In Corporate America

and,

Generation X Office Lingo